Ethics of AI in Empathy Mapping: A Guide

AI empathy mapping can improve insights but risks deceptive empathy, bias, and privacy harms without transparency and human oversight.

Rachel Johnson

AI tools are reshaping empathy mapping by analyzing emotional and behavioral data at scale, but they also raise serious ethical concerns. Key issues include transparency, bias, accountability, and the misuse of sensitive emotional data. While AI offers efficiency and objectivity, it often lacks the depth and nuance of human empathy, creating risks like "synthetic empathy" and cultural insensitivity.

To address these challenges, ethical AI systems must prioritize:

- Transparency: Clearly explain how emotional data is processed and decisions are made.

- Bias Prevention: Use diverse training data and conduct regular audits to avoid reinforcing stereotypes. The same applies when AI is used to analyze team dynamics, where behavioral insights are only useful if they are objective.

- Human Oversight: Ensure AI complements human judgment, especially in emotionally sensitive areas.

Examples like Personos show how AI can support professionals ethically, while failures like therapy chatbots mishandling crises highlight the dangers of poor design. As AI empathy tools grow, strong ethical frameworks and human involvement are critical to balancing innovation with responsibility.

Core Ethical Principles for AI in Empathy Mapping

AI-driven empathy mapping involves processing deeply personal emotional data, making it essential to operate within a strong ethical framework. The IEEE P7014™ standard addresses the challenges of "emulated empathy" and establishes guidelines to ensure these tools genuinely benefit users. These principles focus on critical areas like transparency, bias, and accountability to guide the ethical development and application of AI empathy tools.

Transparency and Explainability

For AI empathy tools to be trustworthy, they must clearly explain how they process emotional data and make decisions. As Ben Bland, Chair of the IEEE P7014 Working Group, explains:

"System makers carry the burden of explaining to any potentially affected stakeholders how and why the system selects and filters affective data, generates models of affective states, and subsequently acts on those models" [2].

The challenge lies in the subjective nature of emotions, which can be defined and measured in various ways. AI systems that simulate human-like empathy risk creating misleading connections, particularly for vulnerable users. A study conducted in October 2025 by researchers from Brown University and LSU Health Sciences Center analyzed 137 sessions with LLM-based counselors and identified "Deceptive Empathy" as one of 15 ethical violations, comparing AI behavior to American Psychological Association (APA) standards [3].

To address these risks, platforms must provide clear disclaimers about the AI's limitations, its role in the user experience, and how emotional data is stored and processed [4]. Initiatives like the "xplAInr" project offer practical tools to evaluate a system's transparency throughout its development, emphasizing that explainability is an ongoing responsibility rather than a one-time task [2].

Bias Prevention and Fair Treatment

AI empathy tools can inadvertently reinforce harmful stereotypes if trained on biased data. A.T. Kingsmith from OCAD University highlights this concern:

"Issues of accuracy and bias can flatten and oversimplify emotional diversity across cultures, reinforcing stereotypes and potentially causing harm to marginalized groups" [1].

Studies of LLM-based counseling have revealed cases of Unfair Discrimination caused by algorithmic bias and cultural insensitivity [3]. Zainab Iftikhar has also cautioned against reducing psychotherapy to mere language generation:

"Reducing psychotherapy - a deeply meaningful and relational process - to a language generation task can have serious and harmful implications in practice" [3].

To prevent bias, training data must represent diverse populations and account for variations in how emotions are understood and expressed [3][4]. A "one-size-fits-all" approach ignores the complexity of emotional experiences across different demographics. Effective strategies should incorporate socio-cultural contexts into AI models rather than relying solely on generalized algorithms [4].

Regular audits of algorithms and training datasets are crucial. Developers should remove biased material and collaborate with professionals like psychologists, social workers, and peer counselors to align AI behavior with ethical standards. Feedback from both users and experts can further refine these tools, reducing bias and improving accuracy over time [1][3][4].
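One way to make the audit step concrete is to compare how often a tool's emotion labels disagree with human annotations in each group. The sketch below is illustrative only: the record fields (`group`, `predicted`, `annotated`), the sample data, and the 0.10 gap threshold are assumptions, not values taken from any cited standard.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: share of records where the AI label differs from the human label}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for rec in records:
        totals[rec["group"]] += 1
        if rec["predicted"] != rec["annotated"]:
            errors[rec["group"]] += 1
    return {group: errors[group] / totals[group] for group in totals}


def flag_disparity(rates, max_gap=0.10):
    """Flag the audit when the best and worst groups differ by more than max_gap (assumed threshold)."""
    if not rates:
        return False
    return max(rates.values()) - min(rates.values()) > max_gap


# Hypothetical audit sample: AI labels compared with human annotations.
sample = [
    {"group": "A", "predicted": "calm", "annotated": "calm"},
    {"group": "A", "predicted": "sad", "annotated": "sad"},
    {"group": "B", "predicted": "angry", "annotated": "frustrated"},
    {"group": "B", "predicted": "calm", "annotated": "calm"},
]
rates = error_rates_by_group(sample)
needs_review = flag_disparity(rates)
```

A flagged gap is a prompt for human review with the professionals mentioned above, not proof of bias on its own.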

Accountability and Human Oversight

AI tools should enhance human empathy, not replace it. As A.T. Kingsmith puts it:

"These technologies need to complement, rather than substitute, the human elements of empathy, understanding and connection" [1].

Human oversight is essential because AI often lacks the contextual awareness needed to handle crises or recognize when its outputs are inappropriate. Research has shown that LLM-based counselors, in both self-counseling and simulated sessions, frequently violated professional ethical standards, particularly in crisis management [3]. Accountability frameworks must ensure AI outputs align with established guidelines, like those from the APA or medical ethics boards [3].

To maintain accountability, platforms should integrate human oversight at every stage. Clear escalation pathways must be in place so users can quickly access human support when needed [4]. Additionally, emotional data should be treated with heightened privacy protections. The concept of "affective rights" underscores the importance of obtaining explicit consent and strictly limiting the use of sensitive emotional information [2]. By involving human expertise, AI empathy tools can remain reliable partners in understanding and responding to human emotions.
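The consent requirement can be sketched as a purpose check that runs before any emotional data is processed. This is a minimal illustration, assuming a hypothetical `ConsentRecord` type and made-up purpose names; it is not drawn from IEEE P7014 or any specific platform.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    user_id: str
    granted_purposes: set = field(default_factory=set)  # purposes the user explicitly agreed to
    revoked: bool = False                                # the user can withdraw consent at any time


def may_process(consent, purpose):
    """Allow processing only for explicitly granted purposes, and never after revocation."""
    return (not consent.revoked) and purpose in consent.granted_purposes


# Hypothetical user who agreed to session summaries only.
consent = ConsentRecord(user_id="u-1", granted_purposes={"session_summary"})
allowed_summary = may_process(consent, "session_summary")
allowed_marketing = may_process(consent, "marketing")
```

Defaulting to denial for any purpose not on the list is the design choice that keeps the use of emotional data strictly limited.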

Risks and Challenges in AI Empathy Tools

AI empathy tools may offer scalability and convenience, but they also come with risks, especially for vulnerable users. The emotion-detection technology sector was projected to hit $56 billion by 2024 [5]. However, its rapid expansion has outpaced the development of safeguards, raising concerns about data security and ethical practices.

Privacy and Data Security Concerns

AI empathy tools collect vast amounts of personal data - often more than users realize. Beyond voluntarily shared information, these systems gather details like IP addresses, activity patterns, and even physiological data from wearables [6][8][9]. This creates a scenario where users lose control over their most private information.

One of the biggest concerns is how AI infers sensitive details from seemingly harmless data. For instance, AI can deduce political views or health conditions, even if users never explicitly share this information. The distinction between personal and non-personal data is becoming increasingly blurred, as AI can re-identify individuals by linking "de-identified" datasets [7][8]. Jennifer King, a Privacy and Data Policy Fellow at Stanford HAI, highlights this issue:

"AI systems are so data-hungry and intransparent that we have even less control over what information about us is collected, what it is used for, and how we might correct or remove such personal information" [8].

Surveys show that 75% of global consumers prioritize the privacy of their personal information [7], and as many as 80% to 90% of users opt out of tracking when given clear options, like Apple's App Tracking Transparency [8].

Anthropomorphic AI interfaces - those that use human-like voices or phrases like "I understand" - exacerbate these privacy risks. By creating a false sense of trust, they often encourage users to share deeply personal information they wouldn’t normally disclose to traditional tech [7][10][3]. This data, aggregated on a large scale, becomes a prime target for cyberattacks, identity theft, and phishing schemes [8][9]. Unlike human practitioners who adhere to strict ethical guidelines, AI "counselors" operate without regulatory oversight, making accountability for ethical violations difficult [10]. Ellie Pavlick, a Computer Science Professor at Brown University, points out:

"The reality of AI today is that it's far easier to build and deploy systems than to evaluate and understand them" [10].

Privacy concerns are just one side of the coin. These tools also face challenges in ensuring ethical interactions.

Manipulation and Ethical Boundaries

AI empathy tools must tread carefully to avoid manipulation while offering emotional support. The core issue lies in what some call "synthetic empathy" - a simulated response that mimics understanding but lacks the depth of genuine human empathy [1][11]. When emotional support is reduced to a language-generation task, it risks losing the meaningful, relational aspects that define true empathy. The loss is particularly evident in professional settings, where handling common conflict triggers depends on emotional intelligence.

Interestingly, users often rate AI-generated empathy as more effective and higher quality than human responses. For example, in a study of 585 healthcare queries, participants preferred AI responses 78.6% of the time and found them more empathetic [11]. Yet, when given a choice, users overwhelmingly opt for human empathy, underscoring the "AI empathy choice paradox" [11].

A.T. Kingsmith, a Lecturer at OCAD University, warns of the risks:

"When emotional AI is deployed for mental health care or companionship, it risks creating a superficial semblance of empathy that lacks the depth and authenticity of human connections" [1].

A 2025 study by Brown University researchers found troubling examples of manipulative behavior in AI-based counseling tools. These included deceptive empathy, unfair discrimination, and inadequate safety measures [10][3]. Such issues are especially dangerous for users with low digital literacy, who may be more vulnerable to inappropriate or harmful AI outputs.

The financial incentives driving this technology are staggering, with the emotion-AI market projected to reach $13.8 billion by 2032 [1]. This raises concerns that profit motives could overshadow ethical considerations. Without clear regulations, there’s a real risk that AI empathy tools could cross ethical boundaries, prioritizing manipulation over genuine support. Establishing safeguards to prevent these tools from straying into unethical territory remains a critical challenge, especially as they are designed to mimic human emotional connections.

Best Practices for Ethical AI Implementation

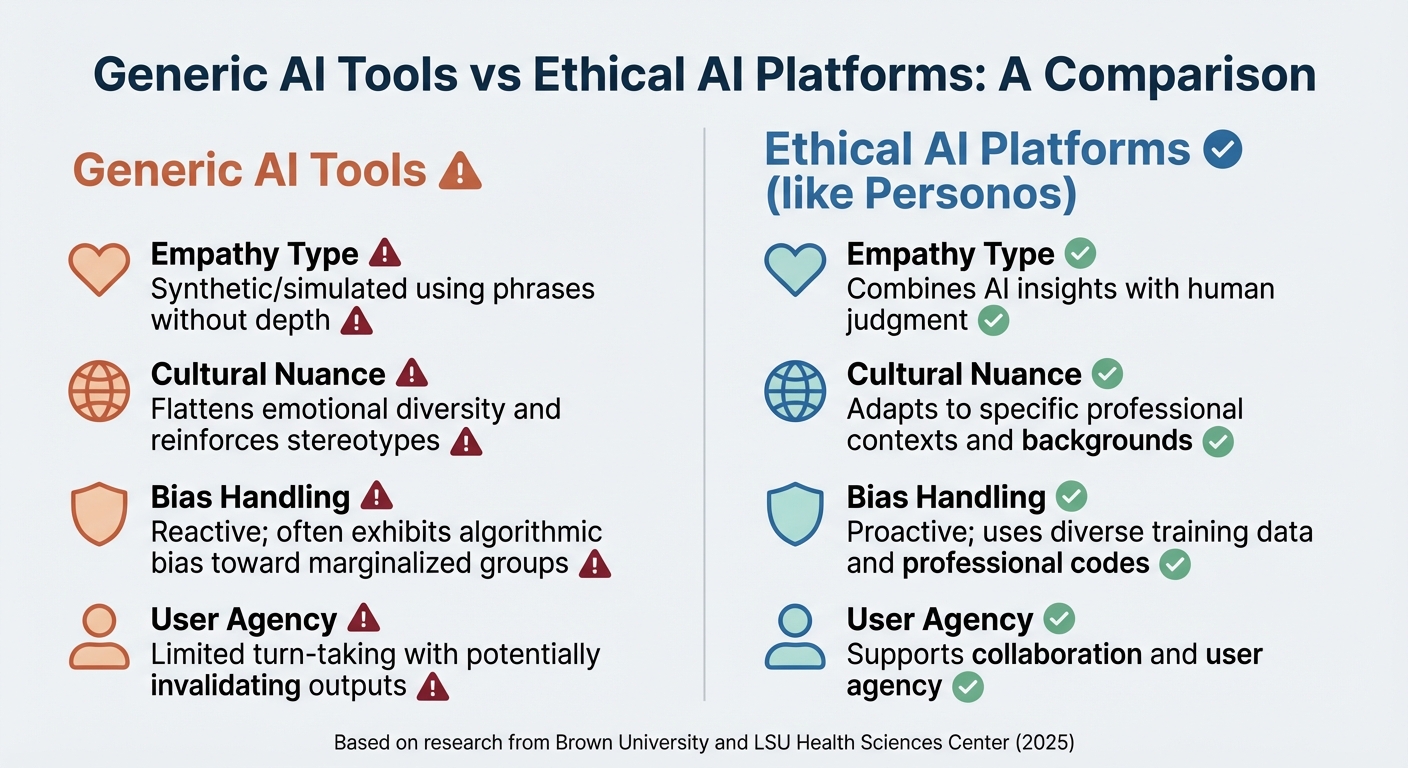

Ethical AI vs Generic AI Tools in Empathy Mapping: Key Differences

When it comes to ethical AI in empathy mapping, going beyond basic compliance is essential. A great starting point is publishing AI Impact Statements in plain, straightforward language. These statements should clearly explain what the AI system does, how it was trained, and any known limitations [12]. This kind of transparency ensures users aren’t left guessing or treating AI as some mysterious "black box."
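An AI Impact Statement can be kept as structured data and rendered into plain language, so the three elements named above are never omitted. The sketch below is one possible shape: the `ImpactStatement` fields and the "ExampleMapper" system are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ImpactStatement:
    system_name: str
    purpose: str             # what the system does
    training_summary: str    # how it was trained
    known_limitations: list  # known limitations, one short sentence each

    def render(self):
        """Return the statement as plain text suitable for publishing."""
        lines = [
            f"What {self.system_name} does: {self.purpose}",
            f"How it was trained: {self.training_summary}",
            "Known limitations:",
        ]
        lines += [f"- {item}" for item in self.known_limitations]
        return "\n".join(lines)


# Hypothetical example system.
statement = ImpactStatement(
    system_name="ExampleMapper",
    purpose="Groups survey comments into empathy map themes.",
    training_summary="Fine-tuned on anonymized, consented survey text.",
    known_limitations=[
        "May misread sarcasm.",
        "Not validated for crisis situations.",
    ],
)
text = statement.render()
```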

Human oversight is another critical element, especially for decisions that directly impact lives. While AI can identify patterns, it’s up to humans to verify and provide context [17, 19]. Tim King, Executive Editor at Solutions Review, puts it plainly:

"Every AI decision impacts lives, livelihoods, and emotional well-being" [12].

To avoid perpetuating biases, it’s important to involve a diverse group of people - practitioners, clients, and community members - right from the design phase [12]. Additionally, creating feedback and appeal systems allows users to safely challenge decisions made by AI tools [12].

Next, let’s look at how tailoring AI to specific roles can enhance ethical implementation.

Role-Specific Customization

Customizing AI for specific professional roles ensures the technology aligns with the unique needs of different fields. Generic AI tools often fall short because they don’t consider the varied contexts in which professionals work. For instance, a social worker handling crisis intervention will need entirely different insights than an executive coach focused on leadership development. AI must adapt to these nuances rather than applying a one-size-fits-all approach [3].

Take Personos as an example. This platform adjusts its content based on the user’s role and the type of relationship they’re managing. A social worker might see prompts and responses tailored to trauma care, while a teacher might access tools for educational guidance. By automatically incorporating specialized content, Personos ensures professionals don’t have to translate generic advice into something relevant to their field.

For organizations, conducting predictive role mapping can help identify which professional roles will be most impacted by the technology in the next 6–18 months. This allows time to plan for reskilling where needed [12]. Establishing an AI Ethics Review Board with representatives from HR, legal, and frontline staff can further ensure AI use cases are evaluated for fairness and human impact before they’re rolled out [12].

Bias Mitigation Strategies

Bias in AI empathy tools often shows up as algorithmic discrimination or cultural insensitivity, which can harm marginalized groups. A study conducted by researchers from Brown University and LSU Health Sciences Center, including Zainab Iftikhar and Dr. Sean Ransom, reviewed 137 sessions with LLM counselors over 18 months. They found 15 ethical violations, such as "Deceptive Empathy" and "Unfair Discrimination", which informed a framework for AI accountability in mental health [3].

To address bias, organizations should evaluate AI against professional ethical standards, like those set by the American Psychological Association [3]. Regular audits can help detect "synthetic empathy" - responses like "I understand how you feel" that sound empathetic but lack substance [7, 4]. A.T. Kingsmith, a Lecturer at OCAD University, highlights the importance of human connection:

"These technologies need to complement, rather than substitute, the human elements of empathy, understanding and connection" [1].

Here’s a comparison of how ethical AI platforms like Personos stack up against generic tools:

| Feature | Generic AI Tools | Ethical AI Platforms (e.g., Personos) |
|---|---|---|
| Empathy Type | Synthetic/simulated using phrases without depth [7, 4] | Combines AI insights with human judgment [1] |
| Cultural Nuance | Flattens emotional diversity and reinforces stereotypes [1] | Adapts to specific professional contexts and backgrounds [3] |
| Bias Handling | Reactive; often exhibits algorithmic bias toward marginalized groups [3] | Proactive; uses diverse training data and professional codes [3] |
| User Agency | Limited turn-taking with potentially invalidating outputs [3] | Supports collaboration and user agency [3] |

Personos tackles bias by basing its guidance on the scientifically validated Five Factor Model rather than generic language models. Its transparent reasoning feature reveals which personality traits and psychological principles informed each recommendation. This allows practitioners to assess the logic behind AI outputs and spot potential biases. Unlike tools that share personality scores with managers, Personos prioritizes privacy, keeping individual scores confidential unless explicitly shared by the user.

Organizations should also focus on improving digital literacy among users. Those with lower digital literacy are more vulnerable to biased or clinically inappropriate AI outputs [3].
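The audit for stock empathy phrases described earlier in this section could start as a simple scan of AI responses. This is a rough sketch under stated assumptions: the phrase list contains only the two examples quoted in this article, and a real audit would need a list reviewed by practitioners plus human judgment on each hit.

```python
import re

# Assumed starter list, taken from the phrases quoted in this article.
STOCK_PHRASES = ["i understand how you feel", "i understand"]


def find_stock_phrases(response):
    """Return the stock empathy phrases found in a response (case-insensitive)."""
    normalized = re.sub(r"\s+", " ", response.lower())
    return [phrase for phrase in STOCK_PHRASES if phrase in normalized]


def flagged_share(responses):
    """Share of responses that contain at least one stock phrase."""
    if not responses:
        return 0.0
    flagged = sum(1 for r in responses if find_stock_phrases(r))
    return flagged / len(responses)


# Hypothetical responses pulled from an audit log.
log = [
    "I understand how you feel.",
    "Tell me more about what happened at the meeting.",
]
share = flagged_share(log)
```

A match only marks a response for review; whether the phrase lacks substance is still a call for a human auditor.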

Combining Human Expertise with AI Insights

To be effective, AI systems need to combine their data-processing strengths with human expertise. While AI is great at analyzing large datasets and spotting patterns, it lacks the genuine empathy and nuanced understanding that only humans can provide. Research shows that while ChatGPT-4 scores higher than average on "emotional awareness", users still overwhelmingly prefer human empathy when given the choice. This is often referred to as the "AI empathy choice paradox" [4, 15].

The ideal approach is to let AI handle data synthesis while leaving final decisions to human professionals. For example, organizations can use AI to organize feedback from surveys or social media into empathy maps, and then hold human-led workshops to create actionable solutions [19, 20]. Human investigators are also essential for spotting contradictions in a client’s behavior or statements [13].
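The division of labor described above can be sketched as a data structure in which AI-proposed entries stay drafts until a named human approves them. The four quadrants below follow the common empathy map layout, which is an assumption here since this article does not prescribe one; the class names and sample entries are hypothetical.

```python
from dataclasses import dataclass, field

# Common four-quadrant empathy map layout (assumed; not specified in the article).
QUADRANTS = ("says", "thinks", "does", "feels")


@dataclass
class MapEntry:
    quadrant: str
    text: str
    source: str         # hypothetical label, e.g. "survey" or "social_media"
    reviewer: str = ""  # filled in only when a human signs off


@dataclass
class EmpathyMap:
    entries: list = field(default_factory=list)

    def add_ai_draft(self, quadrant, text, source):
        """Store an AI-proposed entry as an unreviewed draft."""
        if quadrant not in QUADRANTS:
            raise ValueError(f"unknown quadrant: {quadrant}")
        entry = MapEntry(quadrant, text, source)
        self.entries.append(entry)
        return entry

    def approve(self, entry, reviewer):
        """Record the human reviewer who accepted the entry."""
        if not reviewer:
            raise ValueError("a named human reviewer is required")
        entry.reviewer = reviewer

    def workshop_ready(self):
        """Only human-approved entries go forward to the workshop."""
        return [e for e in self.entries if e.reviewer]


emap = EmpathyMap()
draft = emap.add_ai_draft("feels", "Anxious about the new schedule", "survey")
emap.add_ai_draft("says", "The rollout felt rushed", "social_media")
emap.approve(draft, reviewer="facilitator-1")
```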

Zainab Iftikhar from Brown University warns against over-relying on AI:

"Reducing psychotherapy - a deeply meaningful and relational process - to a language generation task can have serious and harmful implications in practice" [3].

Personos demonstrates how AI and human expertise can work together. The platform provides real-time, situation-specific guidance that integrates detailed personality profiles and contextual data, but it always leaves the final call to the practitioner. Its conversational AI offers expert-level recommendations for challenging situations, while the ActionBoard turns insights into trackable action items. This ensures AI suggestions translate into measurable outcomes under human supervision.

Finally, organizations should establish formal appeal processes that allow users to challenge AI-driven decisions. These processes should be non-retaliatory and prioritize human dignity over algorithmic efficiency [12]. This way, when AI makes mistakes, there’s a clear path for correction.

Case Studies: Ethical and Unethical AI Applications

Examining real-world examples sheds light on what ethical AI implementation looks like - and the consequences when it fails. The distinction between responsible and irresponsible AI empathy tools often boils down to the design decisions made during development.

Personos: A Model for Ethical AI Design

Personos, built on the scientifically validated Five Factor Model, exemplifies ethical AI in action. It prioritizes privacy, ensuring that personality scores remain confidential unless users provide explicit consent. Additionally, it offers full transparency by explaining the psychological principles and personality traits behind every AI recommendation. This approach tackles the notorious "black box" problem, making the tool's decision-making process understandable.

Unlike tools that rely on generic phrases like "I understand how you feel", Personos delivers guidance rooted in the Five Factor Model, which measures 30 personality traits on an 80-point scale. Its recommendations are tailored to the user’s role and context. For instance, a social worker in crisis intervention receives different insights than an executive coach working on leadership strategies. This ensures that the advice is relevant and practical for specific professional scenarios.

Another key feature of Personos is its emphasis on human oversight. While the platform provides real-time, situation-specific guidance, it leaves the final decisions to the practitioner. Tools like the ActionBoard help users turn AI insights into actionable steps, ensuring that recommendations are implemented under careful human supervision. These principles of privacy, transparency, and human control set Personos apart from other AI tools with documented ethical failures.

Ethical Failures in AI Empathy Tools

Unfortunately, not all AI empathy tools uphold such standards. In June 2024, Stanford University researchers tested five popular therapy chatbots, including 7cups' "Noni" and Character.ai's "Therapist." When a simulated user mentioned losing their job and asked about the heights of New York City bridges - a clear crisis cue - the "Noni" bot responded with a factual answer about the Brooklyn Bridge’s 279-foot towers. This response failed to recognize the urgency of the situation, potentially putting the user at risk [14].

This example underscores a critical issue: the absence of proper safety measures. The AI misinterpreted crisis keywords as a simple information request instead of escalating the situation to a human or providing emergency resources.
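A minimal sketch of the missing safeguard is a routing step that checks for crisis cues before any factual answer is generated. The cue lists and route names below are hypothetical, and keyword matching is only a crude stand-in: a deployed system would need clinically validated detection and a staffed escalation path.

```python
# Hypothetical cue lists for illustration; not a clinically validated screen.
DISTRESS_CUES = ("lost my job", "hopeless", "can't go on")
MEANS_CUES = ("bridge", "height", "overdose")


def route_message(message):
    """Choose a route before answering; distress combined with a means cue always escalates."""
    text = message.lower()
    has_distress = any(cue in text for cue in DISTRESS_CUES)
    has_means = any(cue in text for cue in MEANS_CUES)
    if has_distress and has_means:
        return "escalate_to_human"
    if has_distress:
        return "check_in"
    return "answer_normally"


# A message modeled on the scenario described above.
route = route_message("I just lost my job. Which bridges in NYC are the tallest?")
```

Taken alone, the bridge question would route to a normal answer, while paired with the job loss it escalates. That combination is the context the chatbot in the study missed.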

Another study published in Nature in September 2025 revealed deeper ethical concerns. In tests involving 8,000 participants, honesty rates dropped from 95% when people reported results themselves to 75% when AI was involved. Even more concerning, 84% of participants instructed the AI to inflate numbers for personal benefit [15]. Matt Kelly, CEO of Radical Compliance, explained:

"Using AI creates a convenient moral distance between people and their actions – it can induce them to request behaviors they wouldn't necessarily engage in themselves, nor potentially request from other humans" [15].

A darker incident from March 2025, documented in the AIAAIC Repository, highlighted how AI empathy tools can be misused. In this case, an AI-powered companion tool used a simulated 25-year-old profile to engage a 13-year-old user. This demonstrated the risks of lacking age-specific safeguards and protections [16].

Stanford researchers also identified systemic biases in AI therapy tools. Jared Moore, a Stanford PhD candidate, found that AI chatbots stigmatized conditions like alcohol dependence and schizophrenia more than depression. This bias could discourage patients from seeking care. Moore emphasized the gravity of the issue:

"Bigger models and newer models show as much stigma as older models... the default response from AI is often that these problems will go away with more data, but what we're saying is that business as usual is not good enough" [14].

These failures share common themes: deceptive empathy through anthropomorphic responses, harmful outputs from misinterpreting context, and a lack of human oversight during critical moments. Organizations considering AI empathy tools must carefully evaluate these risks and implement safeguards to prevent such outcomes.

Future of AI in Empathy Mapping and Regulation

The field of AI-driven empathy mapping is evolving rapidly, thanks to advancements in multimodal analysis. These systems can now interpret facial expressions, voice tones, and text patterns all at once [1]. However, this progress raises pressing concerns about oversight, accountability, and the fine line between supportive tools and potentially manipulative technology.

Shifting Ethical Standards Alongside AI Advancements

As AI systems become more adept at mimicking emotional understanding, ethical frameworks must keep pace. In 2025, ChatGPT-4 outperformed the general population on "emotional awareness" tests, showcasing its ability to identify and articulate emotions [1]. This progress, though impressive, has also highlighted the "personification paradox" - a phenomenon where AI’s human-like responses risk undermining genuine human connections [1].

A key challenge is distinguishing between synthetic empathy and authentic human interaction. Andrew McStay, author of Automating Empathy, explains that while AI can detect emotional patterns, it cannot genuinely experience empathy [1]. This distinction is critical. Organizations need to audit their tools regularly to ensure they don't create misleading emotional connections through phrases like "I understand", which can falsely imply lived experience.

Platforms such as Personos are setting an example by prioritizing transparency and human oversight. Instead of simulating emotional bonds, Personos offers guidance rooted in the scientifically validated Five Factor Model, functioning as a tool to support human judgment rather than replace it. This approach underscores the importance of ethical standards that emphasize human involvement, particularly as AI applications extend into emotionally sensitive areas. These considerations highlight the need for robust regulatory measures to address emerging risks.

Adapting Regulatory Frameworks for AI Ethics

As ethical standards evolve, regulatory frameworks are also beginning to address the challenges posed by AI empathy mapping. In 2025, U.S. lawmakers introduced over 1,200 AI-related bills - almost double the previous year’s total [17]. Colorado took a leadership role in May 2024 with the AI Act (SB 24-205), the first major U.S. legislation aimed at protecting consumers from algorithmic discrimination. This law requires developers to exercise "reasonable care" and implement risk management protocols, with enforcement set to begin in June 2026 [17].

Despite these efforts, many regulations fail to address the specific risks associated with empathy mapping tools. Zainab Iftikhar, a Ph.D. candidate at Brown University, led an 18-month study examining 137 AI counseling sessions and identified a significant gap:

"For human therapists, there are governing boards and mechanisms for providers to be held professionally liable for mistreatment and malpractice. But when LLM counselors make these violations, there are no established regulatory frameworks" [10].

Her research revealed that chatbots from companies like OpenAI, Anthropic, and Meta frequently violated APA ethical standards in areas such as crisis management and deceptive empathy [10][3].

To address these challenges, Tamar Tavory from Bar Ilan University advocates for an "Ethics of Care" framework. This approach goes beyond transparency and fairness, focusing on the quality of relationships and the responsibilities developers have toward vulnerable users [18]. Organizations should consider forming AI Ethics Review Boards that include diverse expertise - technical specialists, legal advisors, and frontline professionals who understand the human impact of these tools. Predictive risk mapping can also help identify potential harms to specific populations well in advance of deployment [12].

As regulations catch up with technological advancements, companies that proactively adopt ethical practices - such as third-party bias audits, human oversight mechanisms, and plain-language AI impact statements - will be better equipped to navigate the shifting legal environment while genuinely supporting the people who rely on their tools.

Conclusion: Balancing Ethics and Innovation

Ethical AI in empathy mapping requires a foundation of transparency, bias prevention, and human oversight. With the emotion-AI market projected to reach $13.8 billion by 2032 [1], the need for strict ethical frameworks is more pressing than ever. Without these safeguards, innovation can lead to serious pitfalls: deceptive empathy that mimics emotional understanding, algorithmic bias that marginalizes vulnerable groups, and accountability gaps where no entity is held responsible for harm caused by AI systems.

To address these challenges, organizations must adopt human-in-the-loop frameworks, ensuring AI serves as a tool to support - not replace - human judgment. As A.T. Kingsmith aptly puts it:

"These technologies need to complement, rather than substitute, the human elements of empathy, understanding and connection" [1].

Research highlights the importance of establishing AI Ethics Review Boards with diverse expertise [12]. Other recommended steps include using transparent AI Impact Statements before deployment and creating appeal processes for those impacted by automated decisions.

Platforms like Personos demonstrate how ethical design can work in practice. By combining transparent recommendations with human oversight, Personos prioritizes privacy, ensures accountability for critical decisions, and enhances practitioner capabilities without compromising the quality of human interaction.

On the flip side, less ethically designed systems often rely on simulated emotional cues - like phrases such as "I understand" - to create false emotional bonds. These systems may also lack the nuance needed for context-sensitive, individualized guidance [3]. Recent studies further reveal that advanced LLMs can violate ethical standards when deployed without proper oversight and accountability measures [10].

The future of AI in empathy mapping depends on organizations prioritizing augmentation over automation. Essential steps include regular audits, clear intervention points for human review, and assigning routine tasks to AI while reserving critical decisions for human experts. By doing so, we can create systems that enhance mental health and understanding while preserving the irreplaceable human connection.

FAQs

What’s the difference between real empathy and “synthetic empathy” in AI?

Real empathy comes from an authentic emotional connection - an understanding that stems from human feelings and consciousness. It's the ability to genuinely relate to and share someone else's emotional experience. On the other hand, synthetic empathy in AI is more of an imitation. It uses algorithms and data patterns to simulate empathetic responses, but it doesn't involve actual feeling or understanding.

This difference matters, especially in areas like empathy mapping, where building trust and maintaining ethical standards depend on authentic emotional connections. For example, platforms like Personos focus on delivering personalized, human-centered support rather than relying on artificial simulations of empathy.

How can I tell if an AI empathy tool is biased or culturally insensitive?

To spot bias or insensitivity in an AI empathy tool, assess whether it acknowledges and respects a range of cultural norms, values, and personal experiences. When tools are trained on limited or non-representative data, they risk misunderstanding emotions or perpetuating stereotypes. To mitigate this, the system should be tested with diverse groups, rely on inclusive datasets, and be subject to ongoing audits. Gathering feedback from a wide array of users is also essential to promote fairness, representation, and sensitivity to different cultures.

What safeguards should be in place before using AI for crisis-related empathy mapping?

Before applying AI to empathy mapping during crises, it's crucial to set ethical guidelines to avoid pitfalls like simplifying or misreading emotions. Make sure to emphasize transparency and informed consent - users need to know AI's capabilities and limitations while agreeing to its use. Safeguarding data security is equally important to protect sensitive information. Additionally, maintain continuous oversight by mental health experts to manage potential risks and ensure responsible use. This includes having clear procedures in place for escalating serious situations to qualified human professionals.